Agents Commander: A Lightweight Interface for Multi-Agent CLI Workflows

Someone once said that after more than 2,000 years, we still haven’t solved the problem of communication between humans.

In general, that still feels true.

Beyond language barriers, misunderstanding remains one of the most common causes of problems in both business settings and everyday life. Human spoken language is chaotic. There are whispers, gossip, noise, assumptions, emotions, shortcuts, and context gaps everywhere. If you listen carefully, most sentences are short, imprecise, and heavily dependent on what the speaker already knows, feels, or assumes. The same sentence can be interpreted in ways completely different from what was intended.

Structured communication in AI

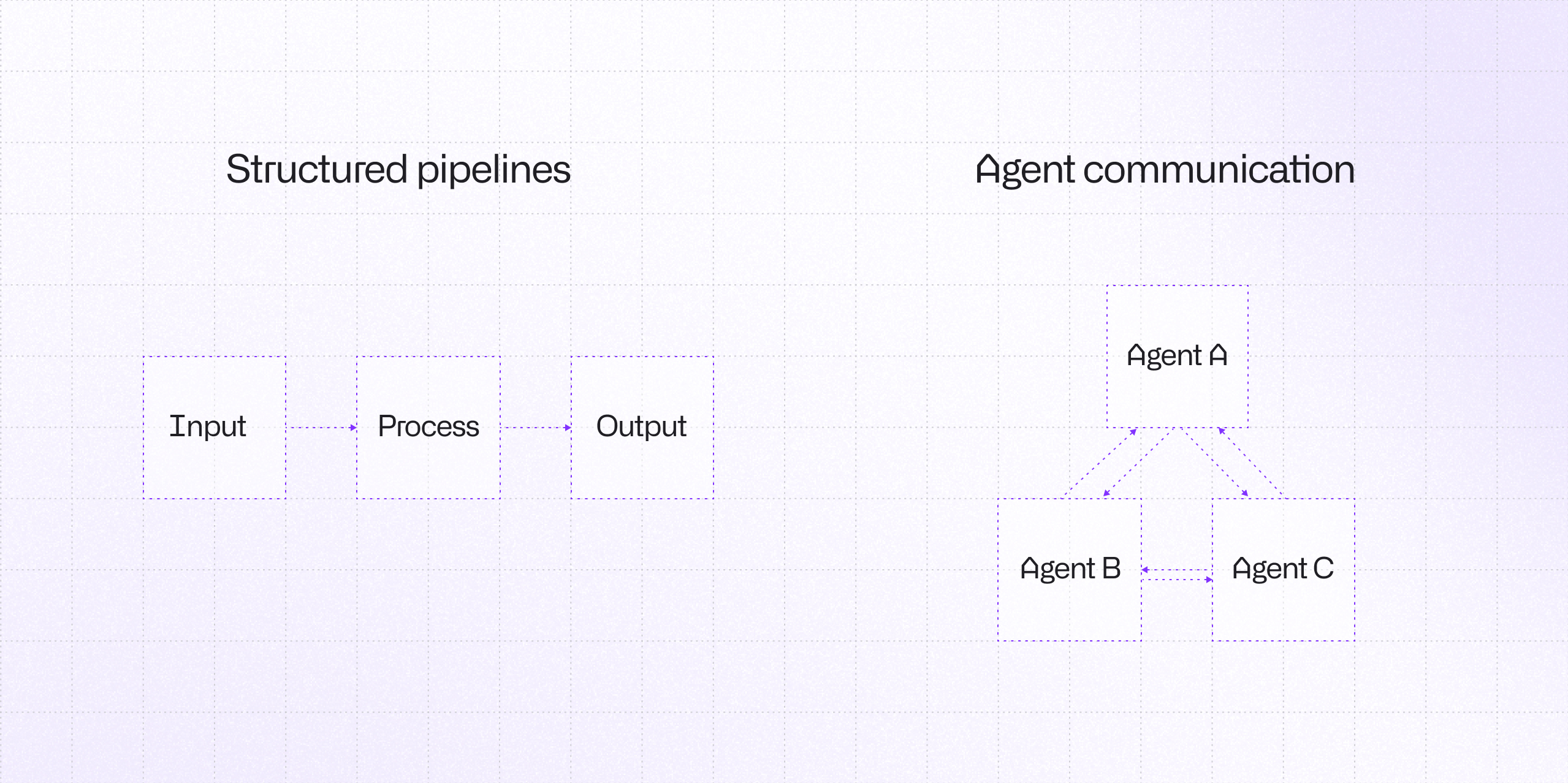

In AI systems, we aim to build communication between agents that is not chaotic.

Today, that communication is usually based on hyper-precise structures like JSON. We use MCP, LangGraph, LangChain, APIs, schemas, and formal protocols. That makes sense from an engineering perspective, but I sometimes wonder whether this is also limiting broader research into AGI. Maybe intelligence does not emerge only from rigid structure. Maybe some part of it emerges from imperfect exchange, negotiation of meaning, and adaptation through communication itself.

LLM workflows in CLI environments

In my daily work, I constantly switch between agentic CLIs and apps like Codex and Claude. I use all of them heavily for development and experimentation. However, I'm starting to feel that we are living through something like the early 1990s of computer science again: a new beginning.

LLMs feel like a non-linear phase transition in how humans interact with computers. I remember the classical hardware eras: floppy disks, CDs, eject buttons, file managers. Today, I swap local LLMs on my DGX almost like swapping floppies or CDs in a drive.

In LM Studio, there is even an eject-style button that looks like it came from an old CD-ROM. There is something strangely old-school and exciting about all of this: fancy but raw CLIs, hacker-like workflows, terminals full of numbers and characters, huge datasets streaming through text interfaces, and hardware that feels almost neural-native. These are all signs that a new computer era has begun.

Agents Commander CLI interface

That is why I decided to vibe-code a classical Norton Commander-style interface, just to regain control over all my agents working through CLI, while keeping visibility over the file structure. I also wanted a practical way to inspect markdown files, skills, and important artifacts without constantly switching windows and losing context.

When I finished the first prototype, I called it Agents Commander. Initially, it was simply a way to manage a multi-agent CLI environment.

But then I had another thought:

If every panel contains a different agent, maybe they should be able to communicate with each other.

That led me to implement what I call the Commander Protocol.

It is an extremely simple way for agents to communicate inside Agents Commander. Once an agent learns the protocol, it knows how to send messages to other agents in nearby panels. The proof of concept relied on a single marker format:

===COMMANDER:SEND:<agent_type>:<panel>=== Please write unit tests for the auth module. ===COMMANDER:END===
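A minimal sketch of how a marker like this could be pulled out of an agent's output, written in Python. It is not the actual Agents Commander code: the regular expression, the `CommanderMessage` class, the `parse_messages` function, and the example values `claude` and `right` are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical pattern for the marker format shown above.
# Assumption: agent_type and panel contain no ':' or '=' characters.
MARKER_RE = re.compile(
    r"===COMMANDER:SEND:(?P<agent_type>[^:=]+):(?P<panel>[^:=]+)===\s*"
    r"(?P<body>.*?)\s*===COMMANDER:END===",
    re.DOTALL,  # let the message body span several lines
)


@dataclass
class CommanderMessage:
    agent_type: str  # which kind of agent should receive the message
    panel: str       # which panel that agent lives in
    body: str        # the free-text instruction between the markers


def parse_messages(text: str) -> list[CommanderMessage]:
    """Return every complete marker found in a chunk of agent output."""
    return [
        CommanderMessage(m["agent_type"], m["panel"], m["body"])
        for m in MARKER_RE.finditer(text)
    ]


sample = (
    "some log noise "
    "===COMMANDER:SEND:claude:right=== "
    "Please write unit tests for the auth module. "
    "===COMMANDER:END==="
)
messages = parse_messages(sample)
```

Everything between the two markers stays free text, so the only rigid part of the exchange is the envelope, not the message itself.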

I built a lightweight observer to detect these markers, using simple stdout watching and stdin injection. It worked: agents from different vendors started communicating with each other.
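The observer idea can be sketched roughly as follows. This is a guess at the general shape rather than the real implementation: the `Observer` class, its `feed` method, and the `inject` mapping from panel name to a write function are hypothetical names. In a real setup each write function might wrap something like the `stdin.write` of a `subprocess.Popen` process; here it is a plain callable so the sketch stays self-contained.

```python
import re
from typing import Callable, Dict

SEND_PREFIX = "===COMMANDER:SEND:"
MARKER_RE = re.compile(
    r"===COMMANDER:SEND:([^:=]+):([^:=]+)===\s*(.*?)\s*===COMMANDER:END===",
    re.DOTALL,
)


class Observer:
    """Watches one agent's stdout and forwards complete messages (hypothetical)."""

    def __init__(self, inject: Dict[str, Callable[[str], None]]):
        # Assumption: one write function per panel, keyed by panel name.
        self.inject = inject
        self.buffer = ""

    def feed(self, chunk: str) -> int:
        """Add a chunk of stdout; return how many messages were delivered."""
        self.buffer += chunk
        delivered = 0
        last_end = 0
        for match in MARKER_RE.finditer(self.buffer):
            _agent_type, panel, body = match.groups()
            target = self.inject.get(panel)
            if target is not None:
                target(body + "\n")  # inject the message as a line of input
                delivered += 1
            last_end = match.end()
        # Drop everything already processed.
        self.buffer = self.buffer[last_end:]
        # Keep only an unfinished marker, or a short tail in case the
        # prefix itself was split across two chunks.
        start = self.buffer.find(SEND_PREFIX)
        if start == -1:
            self.buffer = self.buffer[-(len(SEND_PREFIX) - 1):]
        else:
            self.buffer = self.buffer[start:]
        return delivered


received = []
observer = Observer({"right": received.append})
first = observer.feed("log ===COMMANDER:SEND:codex:ri")
second = observer.feed("ght=== Run the tests. ===COMMANDER:END=== trailing")
```

Buffering matters because terminal output arrives in arbitrary chunks, so a marker can be split across two reads, as in the usage lines above.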

Agent collaboration and workflow experiments

I couldn’t resist pushing it further, so I wrote a small library of prompts for collaborative tasks. One of my favorite experiments is Philosophical Discussion, where agents debate and refine ideas together. What makes this experiment useful is that it exposes how agents negotiate meaning in practice, not just how they follow predefined structures. You can observe disagreement, refinement, and convergence in real time, which is hard to capture in typical pipeline-based systems. It turns communication itself into something you can test, not just assume.

At some point I realized this might be more than just a handy feature. It could be a unique approach to inter-agent communication.

What fascinates me is that this opens a different path from MCP or LangChain-style orchestration. It allows us to test communication processes directly, build pipelines on top of them, and delegate work across agents without servers, heavy configurations, complex APIs, or rigid schemas.

Agents Commander is still early, but it already solves a real problem for me: managing a multi-agent CLI workflow in a lightweight, visible, and practical way. I deliberately didn’t want the source code to exceed 1.44 MB (boomers know why).

If you are an AI researcher and want to study agent communication through a minimal protocol, or if you want to build agent pipelines in a way that is lighter and more experimental than MCP, then Agents Commander may be interesting to you.

We can train models not only to use communication protocols, but also to understand them and adapt them during interactions.

Implications for AGI communication

Maybe this is only an invention. Maybe it is a discovery or an interface pattern. Or maybe it is a tiny clue about how future AGI systems could learn to communicate more naturally. Only time will tell.
